Written by Dr Shalen Sehgal | Crises Control CEO

At 9:15 PM on July 14, 2023, a series of explosions and fires tore through the Glycol II unit at Dow Chemical’s Louisiana Operations facility in Plaquemine. Five bullet tanks were feeding a fire visible for miles. Local emergency officials issued a shelter-in-place for approximately 350 households within a half-mile radius. The Louisiana Department of Environmental Quality arrived on scene roughly 90 minutes after the blast.

The cause, established by the US Chemical Safety and Hazard Investigation Board in its final report published February 2026, was not sabotage. It was portable work lights left inside a reflux drum during maintenance work in May 2023. Over two months of normal operation, the lights degraded. Metal debris punctured a rupture disc, allowing ethylene oxide to mix with air and ignite. More than 31,000 pounds of ethylene oxide, a known carcinogen, were released before the fires were fully extinguished in the early hours of July 16.

The CSB’s chairperson said it plainly: the incident was entirely preventable.

What the Dow Plaquemine case illustrates is not unique to that facility or that industry. It is the gap between how manufacturing incident response is designed to work and how it actually unfolds under real conditions, with real people, under real pressure. The plan says one thing. The incident does another.

This post walks through each phase of a manufacturing incident response as it actually plays out in practice, the breakdowns at each stage, and what changes when structured incident management replaces improvisation.

The Gap Between the Plan and What Actually Happens

Most manufacturing facilities have incident response documentation: emergency response plans, site safety procedures, and OSHA-mandated processes under 29 CFR 1910.119 for facilities handling highly hazardous chemicals. The documentation exists. The gap is not in the writing.

The gap is in execution under pressure. OSHA’s own incident investigation guidance notes a failure mode that appears in almost every post-incident review: organisations conclude that carelessness or failure to follow a procedure was the cause, when the deeper truth is that the infrastructure for executing the procedure was never in place. The plan assumed conditions that did not exist.

Manufacturing recorded 220,000 workplace injuries in 2024 (OSHA/BLS data), with an injury rate of 2.8 per 100 full-time equivalent workers. Siemens’ True Cost of Downtime 2024 report puts the average cost of unplanned downtime in the automotive sector at $2.3 million per hour. The incidents are happening. The question is whether the response infrastructure is built to match them.
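To put the downtime figure in response-time terms, the short calculation below is a rough sketch. It assumes cost scales linearly with outage length, and the 15-minute delay is an illustrative value, not a figure from the Siemens report.

```python
# Illustrative arithmetic only. The hourly figure is the automotive-sector
# average cited above; the 15-minute delay is an assumed example.
HOURLY_DOWNTIME_COST_USD = 2_300_000


def downtime_cost(minutes: float, hourly_cost: float = HOURLY_DOWNTIME_COST_USD) -> float:
    """Cost of an outage of the given length, assuming cost scales linearly with time."""
    return hourly_cost * minutes / 60


print(f"${downtime_cost(15):,.0f}")  # prints "$575,000"
```

On that assumption, every minute spent working out who to call costs roughly $38,000 in this sector.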

What follows is a phase-by-phase account of what manufacturing incident response looks like in real facilities, and where the breakdowns consistently occur. Not the worst-case scenarios. The typical ones.

Phase 1: Detection — What Triggers the Response, and What Does Not

Detection in manufacturing takes two forms. The first is automated: a sensor trips, a SCADA alarm fires, a pressure threshold is exceeded and the system logs it. The second is human: an operator notices something wrong, smells something, sees a change in the process that the system has not yet registered.

Both forms fail in ways the plan rarely anticipates.

Automated detection produces alerts. Facilities with high alert volumes develop informal triage habits where operators assess severity independently before escalating. The alert that requires immediate escalation enters the same informal assessment process as routine alerts. In the Dow Plaquemine case, the degradation of the portable lights inside the reflux drum had been happening for two months. The system detected the rupture disc failure only at the moment of failure, not during the accumulation of conditions that made it inevitable.

Human detection carries its own failure mode. The operator who first notices the anomaly may not be the person with authority to declare an incident. In a facility with complex shift patterns, that operator has to decide whether to report informally to a supervisor, enter it into the system, or handle it themselves first. Each decision point introduces delay.

The interval between detection and the first coordinated action determines whether an incident stays contained or escalates. The delay is almost never caused by slow sensors. It is caused by unclear ownership of what happens when the sensor fires.

Phase 2: First Response — Who Shows Up, Who Does Not, and Who Does Not Know

When the alert fires or the incident is reported, the response begins. In theory, the emergency response team is notified, roles are clear, and the first actions are underway within minutes. In practice, this is where the most consistent failures occur.

Phone trees are the most common first-response mechanism in manufacturing facilities without structured incident management. They fail in predictable ways: the person at the top is unavailable, the message changes as it travels down the chain, and nobody has a complete picture of who has been notified and who has not.

A 2023 ARC Advisory Group study found that human error from manual processes contributes to 30 percent of manufacturing downtime. Much of that manual process happens in the first fifteen minutes of an incident, when someone is trying to reach the right people through informal channels while also managing a live situation. See also: why manufacturing incident response fails in the first 10 minutes.

The shift handover problem compounds this. If the incident occurs in the first minutes of a new shift, the incoming team may not know the status of the equipment or process that just triggered the alert. The Dow Plaquemine investigation found that work lights had been left in the drum during a May 2023 maintenance turnaround and that the unit had subsequently been restarted. The conditions that led to the explosion accumulated silently across multiple shifts.

In a manufacturing incident, the question is not whether the right people exist. They do. The question is whether they are reached in the right sequence, through channels that actually work at that hour, with instructions clear enough to act on without asking a clarifying question.
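One way to picture the alternative to a phone tree is a notification round that sends the same message to every recipient at once and tracks who has acknowledged it. The sketch below is a minimal in-memory model, not any vendor's API. The class names, role names, channels, and message text are all hypothetical, and no real delivery takes place.

```python
from dataclasses import dataclass, field


@dataclass
class Recipient:
    role: str
    channels: list[str]  # hypothetical channel labels, e.g. ["sms", "voice"]


@dataclass
class NotificationRound:
    message: str
    sent: dict[str, list[str]] = field(default_factory=dict)   # role -> channels used
    acknowledged: set[str] = field(default_factory=set)

    def send_all(self, recipients: list[Recipient]) -> None:
        # Everyone gets the identical message in one pass, so nothing is
        # reworded as it travels down a chain. Delivery itself is not modelled.
        for r in recipients:
            self.sent[r.role] = list(r.channels)

    def acknowledge(self, role: str) -> None:
        if role not in self.sent:
            raise KeyError(f"{role!r} was never notified")
        self.acknowledged.add(role)

    def outstanding(self) -> list[str]:
        # The complete picture a phone tree lacks: who has not yet responded.
        return sorted(set(self.sent) - self.acknowledged)


incident = NotificationRound("Pressure alarm, Unit 3: report to muster point B")
incident.send_all([
    Recipient("shift_supervisor", ["sms", "voice"]),
    Recipient("safety_lead", ["sms", "push"]),
    Recipient("maintenance_lead", ["voice"]),
])
incident.acknowledge("safety_lead")
print(incident.outstanding())  # ['maintenance_lead', 'shift_supervisor']
```

The point of the design is the `outstanding()` view: at any moment, someone can see exactly who has been reached and who has not.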

Phase 3: Escalation — Where Information Fragments

Once first responders are on scene, the incident enters its coordination phase. Multiple teams are now involved: operations, maintenance, safety, environmental, sometimes security. Leadership is trying to establish what is happening. External parties may need to be contacted.

This is where information fragments.

The operations team has one picture of the incident. Maintenance has a different one. Safety is working from what it was told, not from direct observation. Leadership is receiving updates from several sources simultaneously, each with partial information, each slightly out of date.

In the Iberville Parish response to the Dow Plaquemine explosion, the shelter-in-place was issued, managed, and lifted over a period of roughly eight hours. That coordination happened through separate channels across local law enforcement, state environmental agencies, site emergency teams, and approximately 350 households, with no single operational picture that all parties shared simultaneously.

Post-incident investigations in manufacturing consistently find the same pattern: the incident was manageable. What was not manageable was the coordination of the response. Decisions were made on incomplete information. Actions were duplicated in some areas and missed entirely in others. The incident log, when it was eventually compiled, did not match the sequence of events that witnesses described.

Phase 4: Containment — Stopping the Incident From Expanding

Containment means different things depending on the incident type. A chemical release requires different actions than an equipment failure, a fire, or a cyberattack on operational technology. What all containment actions share is a requirement for role clarity. The right team executes the right actions in the right sequence.

In practice, the containment phase is where role ambiguity causes the most damage. When nobody is explicitly assigned to a task, the task does not get done. When multiple people assume ownership of the same task, it is done two or three times while something else goes untouched.

The KMCO facility in Crosby, Texas, experienced an explosion on April 2, 2019, that ultimately led to the company’s bankruptcy in May 2020. The CSB’s final report, published December 2023, identified a y-strainer failure in the isobutylene system as the proximate cause. Pumps that could only be started or stopped by operators in the field, not remotely, were central to the operational conditions at the time of the incident. Coordination of who needed to be where, doing what, in what order, was the operational challenge.

Manufacturing facilities that use role-based task assignment rather than name-based assignment report significantly better containment outcomes. When the person named in the plan is unavailable, as they frequently are in 24/7 shift operations, role-based assignment routes the task to whoever currently holds that role on the active shift. No gaps. No assumptions. No delay from discovering the plan named someone who handed over an hour ago.
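The difference between the two approaches can be sketched in a few lines. In this minimal example the plan stores a role on each task, and the person is looked up from the active shift's roster only at the moment of assignment. The roster contents, role names, and operator identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    description: str
    role: str  # the plan names a role, never a person


# Hypothetical rosters: who holds each role on each shift.
ROSTER = {
    "day": {"production_supervisor": "operator-104", "safety_lead": "operator-221"},
    "night": {"production_supervisor": "operator-317", "safety_lead": "operator-098"},
}


def assign(task: Task, active_shift: str, roster: dict = ROSTER) -> str:
    """Resolve a task's role to whoever holds it on the active shift."""
    holders = roster[active_shift]
    if task.role not in holders:
        # An unfilled role is surfaced immediately instead of being assumed covered.
        raise LookupError(f"No one holds role {task.role!r} on the {active_shift} shift")
    return holders[task.role]


isolate = Task("Isolate feed line to Unit 3", role="production_supervisor")
print(assign(isolate, "day"))    # operator-104
print(assign(isolate, "night"))  # operator-317
```

The same task resolves to a different person depending on the shift, and a role with no current holder raises an error rather than silently routing to someone who has gone home.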

Phase 5: Documentation — The Record That Does Not Exist

The post-incident documentation requirement in manufacturing is extensive. OSHA’s recordkeeping requirements under 29 CFR 1904 mandate accurate records of work-related injuries and illnesses. PSM facilities under 29 CFR 1910.119 must document incident investigations and corrective actions. The EPA’s Risk Management Program imposes parallel obligations. Regulators and investigators evaluate the incident on what the documentation shows, not on what the facility says.

In most manufacturing facilities, the incident log is compiled after the fact, from memory, phone call records, and whatever informal notes people took during the response. This creates three problems. First, the timeline is usually inaccurate: people remember the sequence of events differently. Second, actions taken but not recorded are invisible to the investigation. Third, the facility cannot demonstrate compliance with notification timelines it may have met but cannot prove.

The Dow Plaquemine investigation found that the incident was entirely preventable. The lessons it identified, about vessel closure practices, foreign materials exclusion, and inerting system monitoring, are credible because the investigation had access to accurate process data and maintenance records. Facilities where that documentation is incomplete produce investigations that miss the actual root cause.

What Structured Incident Management Changes Across Every Phase

The gaps identified above share a common cause. They are not failures of competence. They are failures of infrastructure. Structured incident management addresses each failure mode directly.

At detection, integration with SCADA and process monitoring systems means that when a sensor threshold is crossed, an automated notification cascade fires immediately to designated roles. Nobody decides whether to report it. Nobody works through a phone tree.
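As a rough illustration of that idea, the sketch below maps a reading that crosses a configured limit directly to the roles that must be alerted, with no human triage step in between. The tag names, limits, and roles are invented for the example, and the actual SCADA integration (how readings arrive) is outside its scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    tag: str                      # hypothetical process tag name
    high_limit: float             # alert when the reading exceeds this value
    notify_roles: tuple[str, ...]


# Hypothetical rules; real limits come from the facility's own process safety data.
RULES = [
    Rule("reactor_pressure_kpa", 850.0, ("shift_supervisor", "safety_lead")),
    Rule("tank_temperature_c", 120.0, ("shift_supervisor",)),
]


def roles_to_notify(tag: str, value: float, rules: list[Rule] = RULES) -> tuple[str, ...]:
    """Return the roles to alert for a reading, or an empty tuple if no limit is exceeded."""
    for rule in rules:
        if rule.tag == tag and value > rule.high_limit:
            return rule.notify_roles
    return ()


print(roles_to_notify("reactor_pressure_kpa", 910.0))  # ('shift_supervisor', 'safety_lead')
print(roles_to_notify("reactor_pressure_kpa", 600.0))  # ()
```

Because the escalation decision lives in the rule table, it is made once, in advance, rather than by whoever happens to see the alarm.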

At first response, role-based task assignment routes actions to whoever currently holds the relevant role on the active shift. The production supervisor who left an hour ago does not stall the response.

At escalation and coordination, a live incident dashboard gives every participant the same operational picture simultaneously. The site manager, the safety lead, the operations team, and external agencies all see the same status in real time. Parallel conversations multiply when nobody has central visibility, and the picture fragments with them. Central visibility removes the need for those parallel conversations.

At containment, task assignment with acknowledgement tracking means the incident commander can see what has been completed, what is in progress, and what has not been acknowledged. Nothing is assumed done. Every gap is visible the moment it appears.
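A minimal sketch of acknowledgement tracking follows. Each task carries its own timestamps, and its status is derived from them, so an unacknowledged task past its window is flagged rather than assumed done. The class, status labels, task name, and five-minute window are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class AssignedTask:
    name: str
    role: str
    assigned_at: datetime
    acknowledged_at: Optional[datetime] = None
    completed_at: Optional[datetime] = None

    def status(self, now: datetime, ack_window: timedelta) -> str:
        """Derive the task's state from its timestamps; nothing is assumed done."""
        if self.completed_at is not None:
            return "completed"
        if self.acknowledged_at is not None:
            return "in_progress"
        if now - self.assigned_at > ack_window:
            return "escalate"  # nobody has picked this up within the window
        return "awaiting_ack"


t0 = datetime(2024, 1, 1, 12, 0)
window = timedelta(minutes=5)  # hypothetical acknowledgement window
task = AssignedTask("Isolate feed valve", "field_operator", assigned_at=t0)

print(task.status(t0 + timedelta(minutes=2), window))  # awaiting_ack
print(task.status(t0 + timedelta(minutes=6), window))  # escalate
task.acknowledged_at = t0 + timedelta(minutes=7)
print(task.status(t0 + timedelta(minutes=8), window))  # in_progress
```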

At documentation, the audit trail is automatic. Every alert sent, every acknowledgement received, every task assigned and completed, every escalation triggered, all time-stamped and stored as the incident unfolds. The compliance record exists by the time the incident closes. It is a live record of what actually happened, not an assembly from memory two days later.
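The principle can be sketched as an append-only log that stamps each event at the moment it is recorded. This is a simplified in-memory illustration under assumed names (`AuditTrail`, `record`, `export`, and the event labels are hypothetical); a production system would also need durable, tamper-evident storage.

```python
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only, time-stamped record of incident events (in-memory sketch)."""

    def __init__(self, clock=lambda: datetime.now(timezone.utc)):
        self._clock = clock      # injectable so the timestamps can be tested
        self._entries = []

    def record(self, event: str, **details) -> dict:
        entry = {
            "seq": len(self._entries) + 1,       # preserves the order of events
            "at": self._clock().isoformat(),     # stamped when it happens, not afterwards
            "event": event,
            "details": details,
        }
        self._entries.append(entry)
        return entry

    def export(self) -> str:
        # One JSON object per line, ready to attach to an investigation file.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


trail = AuditTrail()
trail.record("alert_sent", role="safety_lead", channel="sms")
trail.record("task_acknowledged", task="Isolate feed valve", role="field_operator")
print(trail.export())
```

There is deliberately no method for editing or deleting an entry, which is what separates a record of the incident from a reconstruction of it.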

How Crises Control Supports Manufacturing Incident Response

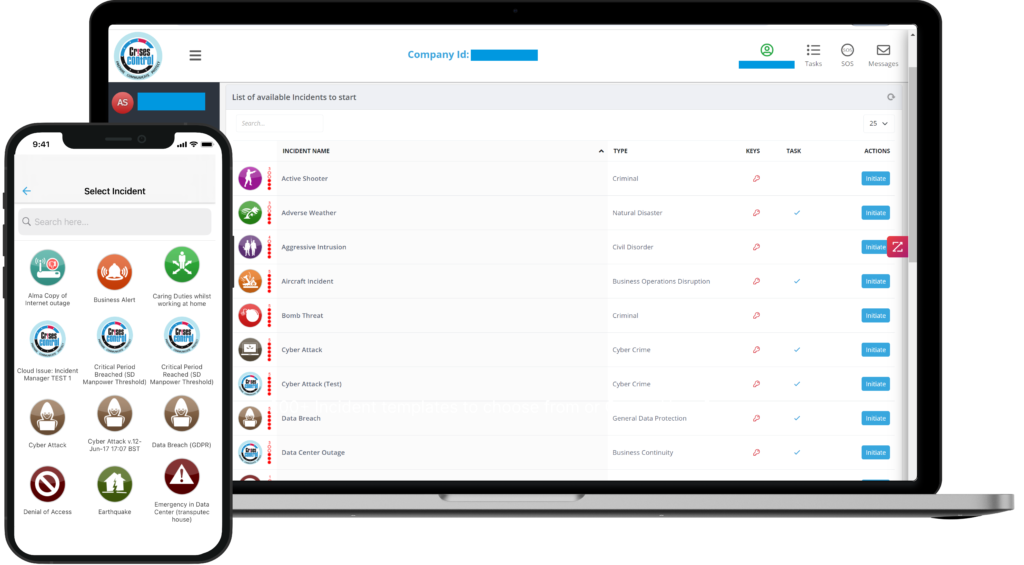

Crises Control is built for the specific conditions of manufacturing incident response: distributed teams, 24/7 shift patterns, multi-site operations, and regulatory documentation requirements that do not pause because the response was complex.

The platform delivers multi-channel mass notification via SMS, voice, push, email, and Microsoft Teams simultaneously. Alerts reach shift workers on the floor, supervisors in the office, and managers off-site through the channels they will actually receive at that hour.

Task assignment is role-based. Predefined incident workflows activate on declaration. Each step is assigned, tracked, and escalated automatically if not acknowledged within a configured window. The live incident dashboard gives decision-makers a complete operational picture in real time, on any device.

The audit trail is automatic and complete, supporting OSHA recordkeeping, PSM documentation, and ISO 45001 compliance without additional effort from a team already managing a live situation. The platform runs on its own cloud infrastructure, separate from production systems and corporate IT.

To see how this works against the specific incident types your facility faces, request a personalised demo.

FAQs

1. What is manufacturing incident response?

Manufacturing incident response is the structured process by which a facility detects, responds to, contains, and documents an unplanned event affecting operations, safety, or the environment. It covers equipment failures, chemical releases, fires, power outages, and cyberattacks on operational technology. An effective response process assigns clear ownership to every action, reaches the right people through reliable channels, maintains a live operational picture for decision-makers, and produces a complete audit trail for regulatory compliance.

2. Why does manufacturing incident response fail in the detection phase?

Detection failures in manufacturing are usually not sensor failures. They are prioritisation failures. Facilities with high alert volumes develop informal triage habits where operators assess severity independently before escalating. The alert that requires immediate escalation enters the same process as routine alerts. The other common failure is that conditions leading to an incident accumulate over time without triggering any alert, becoming visible only at the moment of failure.

3. What is the difference between an incident response plan and incident management software?

An incident response plan is a document describing what should happen during an incident. Incident management software is the infrastructure that executes the plan in real time. Plans fail not because they are written incorrectly but because the conditions they assume (available contacts, reliable channels, clear ownership, and time for manual logging) do not exist under real incident pressure. Software that assigns tasks automatically, routes alerts to active shift roles, tracks acknowledgements, and logs every action makes the plan executable rather than aspirational.

4. What documentation does OSHA require after a manufacturing incident?

OSHA’s recordkeeping requirements under 29 CFR 1904 require accurate records of work-related injuries and illnesses, including the timeline, nature of the event, and response taken. Facilities subject to Process Safety Management under 29 CFR 1910.119 must document incident investigations, root causes, and corrective actions. The EPA’s Risk Management Program imposes parallel requirements. Incident management software that logs every notification, task assignment, acknowledgement, and escalation with timestamps provides this documentation automatically.

5. How does shift handover affect manufacturing incident response?

Shift handover is consistently identified as a high-risk point in manufacturing. When an incident occurs shortly after a shift change, the incoming team may lack context about equipment state, active maintenance work, or process deviations the outgoing team was managing. This gap creates delayed first response and containment errors as teams make decisions based on outdated assumptions. Structured incident management platforms address this by making any active incident, open maintenance task, or flagged safety condition automatically visible to the incoming shift from the moment they log on.